Our BERT encoder is the pretrained BERT-base encoder from the masked language modeling task (Devlin et al., 2018). Jigsaw Unintended Bias in Toxicity Classification. Lesser data: BERT is trained on the BooksCorpus (800M words) and Wikipedia (2,500M words).

# How to fine-tune BERT

For more information about how to configure experiments, check out the Determined experiment configuration documentation.
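As a rough illustration of how record-based lengths in such a configuration relate to batch counts, here is a minimal sketch using the values from the Bert_SQuAD_PyTorch configuration quoted later in this section. It assumes that a "record" is one training example and that one batch consumes `global_batch_size` records; the helper `records_to_batches` is a hypothetical name introduced here and is not part of Determined.

```python
# Values copied from the Bert_SQuAD_PyTorch configuration in this section.
global_batch_size = 12
max_length_records = 180_000             # searcher.max_length.records
min_validation_period_records = 12_000   # min_validation_period.records
num_training_steps = 15_000              # hyperparameters.num_training_steps


def records_to_batches(records: int, batch_size: int) -> int:
    """Hypothetical helper: convert a record count into a whole number of batches."""
    if records % batch_size != 0:
        raise ValueError("record count is not a multiple of the batch size")
    return records // batch_size


# Total batches processed over the whole run (assumes one record = one example).
total_batches = records_to_batches(max_length_records, global_batch_size)

# Batches between two validations if validation runs exactly once per period.
batches_per_validation = records_to_batches(
    min_validation_period_records, global_batch_size
)

# Number of validation periods that fit into the full training length.
validation_periods = max_length_records // min_validation_period_records

print(total_batches, batches_per_validation, validation_periods)
```

Under these assumptions the total batch count equals `num_training_steps`, so if you change `global_batch_size` or the training length you would probably want to adjust `num_training_steps` as well.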

For most people, using BERT is synonymous with using the version.

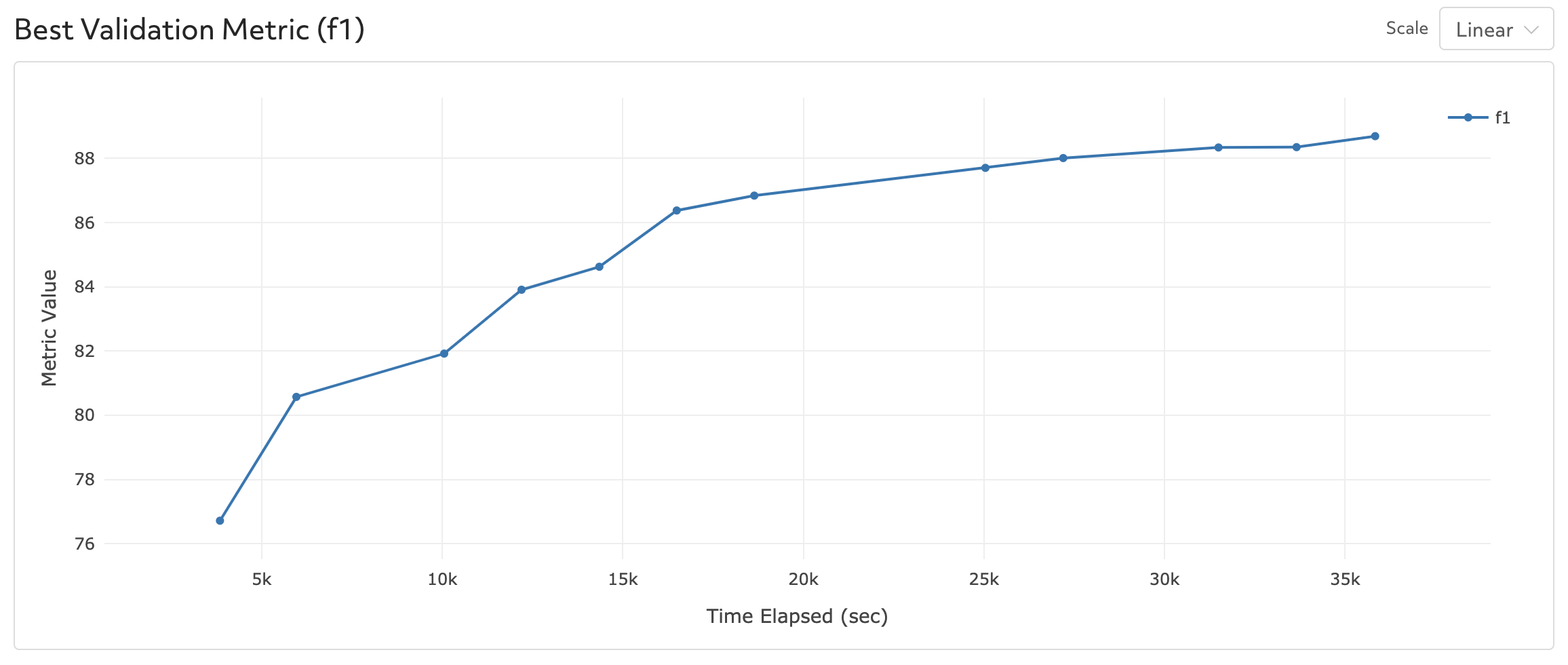

If you want to modify the experiment, for example to change the hyperparameters or the duration of training, you can easily make changes to this file:

    description: Bert_SQuAD_PyTorch
    hyperparameters:
      global_batch_size: 12
      learning_rate: 3e-5
      lr_scheduler_epoch_freq: 1
      adam_epsilon: 1e-8
      weight_decay: 0
      num_warmup_steps: 0
      max_seq_length: 384
      doc_stride: 128
      max_query_length: 64
      n_best_size: 20
      max_answer_length: 30
      null_score_diff_threshold: 0.0
      max_grad_norm: 1.0
      num_training_steps: 15000
    searcher:
      name: single
      metric: f1
      max_length:
        records: 180000
      smaller_is_better: false
    min_validation_period:
      records: 12000
    data:
      pretrained_model_name: "bert-base-uncased"
      download_data: False
      task: "SQuAD1.1"
    entrypoint: model_def:BertSQuADPyTorch

In the tutorial, we fine-tune a German GPT-2 from the Hugging Face model hub. I have only labeled 120 job descriptions with entities such as skills and diploma. We will provide the data in IOB format contained in a TSV file, then convert it to the spaCy JSON format.

To fine-tune BERT using spaCy 3, we need to provide training and dev data in the spaCy 3 JSON format, which will then be converted to a.
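To make the IOB-in-TSV input more concrete, here is a minimal sketch that reads such a file and turns the tags into character-offset entity spans. It assumes one token and its tag per line, separated by a tab, with a blank line between sentences. The sample sentence, the label names `SKILLS` and `DIPLOMA`, and the function names are made up for illustration. It uses only the Python standard library and does not perform the actual conversion to the spaCy format.

```python
from typing import List, Tuple

Token = Tuple[str, str]        # (token text, IOB tag)
Span = Tuple[int, int, str]    # (start char, end char, label)


def read_iob_tsv(text: str) -> List[List[Token]]:
    """Split TSV text into sentences; a blank line ends a sentence."""
    sentences: List[List[Token]] = []
    current: List[Token] = []
    for line in text.splitlines():
        if not line.strip():
            if current:
                sentences.append(current)
                current = []
            continue
        token, tag = line.split("\t")[:2]
        current.append((token, tag.strip()))
    if current:
        sentences.append(current)
    return sentences


def iob_to_spans(sentence: List[Token]) -> Tuple[str, List[Span]]:
    """Join tokens with single spaces and collect (start, end, label) spans."""
    spans: List[Span] = []
    open_span = None  # [start, end, label] of the entity being built
    offset = 0
    for token, tag in sentence:
        start, end = offset, offset + len(token)
        label = tag[2:] if tag[:2] in ("B-", "I-") else None
        continues = (
            tag.startswith("I-") and open_span is not None and open_span[2] == label
        )
        if continues:
            open_span[1] = end                  # extend the current entity
        else:
            if open_span is not None:
                spans.append(tuple(open_span))  # close the previous entity
            # B- tags (and stray I- tags) start a new entity; O starts none.
            open_span = [start, end, label] if label else None
        offset = end + 1                        # +1 for the joining space
    if open_span is not None:
        spans.append(tuple(open_span))
    text = " ".join(token for token, _ in sentence)
    return text, spans


# Hypothetical sample in the assumed layout.
SAMPLE = (
    "Requires\tO\nmachine\tB-SKILLS\nlearning\tI-SKILLS\n"
    "and\tO\na\tO\nMaster\tB-DIPLOMA\n"
)

sentences = read_iob_tsv(SAMPLE)
text, spans = iob_to_spans(sentences[0])
print(text, spans)
```

Because tokens are rejoined with single spaces, the offsets refer to this reconstructed text and not to the original job description, which is a simplification to keep in mind if exact original spacing matters.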